Infos:

- Used Zammad version: 7.0

- Used Zammad installation type: APT

- Operating system: Ubuntu 22.04

- Browser + version: Chrome

- AI: Ollama - llama3.2

Expected behavior:

- Ticket is renamed to something detailed / informative

Actual behavior:

- Ticket is renamed, but not in any meaningful way

Steps to reproduce the behavior:

We have a ticket kiosk, which is basically just the form on a dedicated page. The tickets that come from it are all named “Help Kiosk Ticket”. I would like them to be named based on something in the body of the ticket.

I set the agent to run on tickets with that subject line. It runs, but it doesn’t add any useful information.

Example:

Subject: Help Kiosk Ticket

Body: I lost my charger and I need a replacement.

The subject gets changed to: Help Kiosk Ticket Request

I don’t want to go mucking about in the prompt since it seems like the Zammad team went through a lot of effort to dial in the prompts, but I’m wondering if there is something I should modify in that prompt to help.

That’s interesting. One would think that 128k context should be good enough.

I’ve run it against Zammad AI for comparison, without knowing which model runs in the background, and it returns this: Lost charger replacement request. That’s a good title in my opinion.

I then took the request that Zammad sent to the LLM from the logs and ran it manually against a docker.io/llama3.2 model, which returns basically the same title.

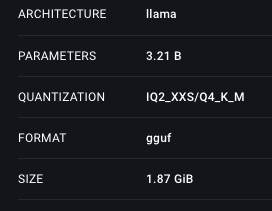

Those are its characteristics, run locally on my MacBook.

Not sure if that helps, but double-check whether you are running the bigger of the two models. I’m of course assuming that the machine you’re running this on has enough power to run these models.

Another side note: are there any articles besides the initial article in the ticket at the moment the agent runs?

It’s running against Ollama with llama3.2:latest, which I believe pulls the 3B instruct model. I have set a context length of 8192. I’m going to increase it to 128K to see whether the results get better and whether it causes any issues.

The GPU only has 8GB of VRAM so I’m trying to balance model size and context window.
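For anyone who wants to repeat the manual test described above with an explicit context window, here is a minimal sketch against Ollama’s REST API (`POST /api/generate` with `options.num_ctx`). It assumes a default local Ollama install on `localhost:11434`; the helper names `build_generate_payload` and `generate` are hypothetical, and this is not how Zammad itself configures the model.

```python
import json
import urllib.request

# Assumption: default local Ollama endpoint; adjust host/port for your setup.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_payload(prompt: str, model: str = "llama3.2", num_ctx: int = 8192) -> dict:
    """Hypothetical helper: build a non-streaming /api/generate request body."""
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,  # return a single JSON object instead of a token stream
        # num_ctx is the context window in tokens; larger values need more VRAM.
        "options": {"num_ctx": num_ctx},
    }


def generate(prompt: str, **kwargs) -> str:
    """Hypothetical helper: send the prompt and return the model's text response."""
    body = json.dumps(build_generate_payload(prompt, **kwargs)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        # With stream=False, Ollama puts the generated text in the "response" field.
        return json.loads(resp.read())["response"]
```

Calling `generate("<prompt copied from the Zammad log>", num_ctx=131072)` would try the 128K window (128 × 1024 = 131072 tokens). On an 8GB GPU, a context that large may push part of the model off the GPU, so comparing 8192 with a few intermediate values is probably more informative than jumping straight to the maximum.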

The ticket only has the initial article. I’m triggering on newly opened tickets with the generic ticket name that my kiosk gives.

This was fixed by moving to Gemma3, but now my ticket summaries are coming back as incorrectly formatted text (each and every letter of the response gets its own line).

In the end, prompts can always be improved, and prompts will also always work differently depending on the used LLM; it’s a very difficult situation.

For the summary problem, this means the LLM is not returning the correct structure. The conversation summary should be returned as an array of paragraphs. The output that you are seeing means that it’s only one string and not an array.

We also tested gemma3:27b in the past, and it was not bad, but this change to the result structure came afterwards. Maybe not every LLM handles it well; with the LLMs we tested, it was fine.

We may be able to improve this situation with a fallback. We’ll think about it.
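To illustrate the symptom and what such a fallback could look like, here is a small Python sketch. It is not Zammad’s code (Zammad is a Rails application), and `render_paragraphs` and `normalize_summary` are hypothetical names. The first function shows why a plain string renders one letter per line: code that iterates over an expected list of paragraphs iterates over the characters of a string instead. The second coerces a string answer back into a list of paragraphs. It assumes that blank lines separate paragraphs and that some models return the JSON array encoded as a string.

```python
import json
from typing import Any


def render_paragraphs(paragraphs) -> str:
    # Expects a list of paragraphs. Passing a plain string iterates over its
    # characters instead, so every letter ends up on its own line.
    return "\n".join(paragraphs)


def normalize_summary(raw: Any) -> list[str]:
    """Hypothetical fallback: coerce an LLM summary into a list of paragraphs."""
    if isinstance(raw, list):
        # Already the expected structure; just drop empty entries.
        return [str(p).strip() for p in raw if str(p).strip()]
    if isinstance(raw, str):
        text = raw.strip()
        # Some models return the array as a JSON-encoded string; try decoding it.
        if text.startswith("["):
            try:
                decoded = json.loads(text)
            except json.JSONDecodeError:
                decoded = None
            if isinstance(decoded, list):
                return normalize_summary(decoded)
        # Otherwise treat blank lines as paragraph separators.
        return [p.strip() for p in text.split("\n\n") if p.strip()]
    raise TypeError(f"unexpected summary type: {type(raw).__name__}")
```

For example, `render_paragraphs("Lost charger")` puts each character on its own line, while `render_paragraphs(normalize_summary("Lost charger"))` returns the text intact. Coercing the response before rendering keeps the prompt unchanged and only tolerates a looser answer shape.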