Infos:

- Used Zammad version: 5.0.3 (Channel Stable)

- Used Zammad installation type: (package via Ansible Role)

- Operating system: Ubuntu Server 20.04 LTS with CloudInit (Automatically installed via Terraform.)

- Browser + version: Mozilla Firefox 96.0.2 and Google Chrome 97.0.x (Windows NT 10.0; Win64; x64)

Expected behavior:

- Agents can work simultaneously without performance problems.

Actual behavior:

- When about 15 agents are connected to Zammad, the system becomes very slow: saving a comment takes 20 to 60 seconds. After a reboot, the system responds very quickly until more users are working on it again.

- Searching for tickets works very well even under load (ElasticSearch works without problems)

- Neither the production log nor the scheduler log shows any errors.

- The monitoring is always error-free.

Steps to reproduce the behavior:

- There are more than about 15 users working with the system.

Hardware

- Zammad runs on a virtualization cluster (Proxmox).

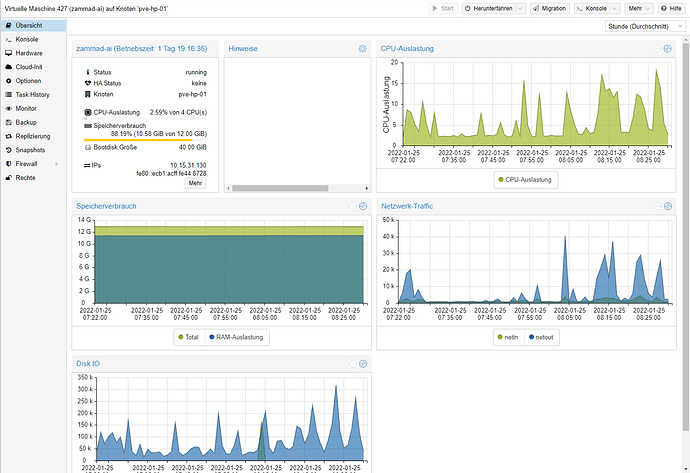

Average resource consumption.

- CPU: Average CPU utilization is 5%; under load it is 10%. On the Ubuntu system itself, the Zammad scheduler jumps between 5% and 100% CPU utilization, with each spike lasting about one second. (The cluster has 48 x Intel(R) Xeon(R) CPU E5-2697 v2.)

- RAM: More RAM does not bring any improvement. ElasticSearch uses a MemoryLock so that nothing goes into swap.

- Storage: 40 GB (Data Center SSDs - Samsung SM883 - 540 MB/s Read and 520 MB/s Write). Switching from an HDD storage pool to SSD brought a 30% speed advantage when saving replies / comments under load.

Tested with the following hardware settings:

15 CPUs and 24 GB RAM

It did not bring any improvement.

State when the problem occurred:

zammad run rails r "p User.joins(roles: :permissions).where(roles: { active: true }, permissions: { name: 'ticket.agent', active: true }).uniq.count"

72

zammad run rails r "p Sessions.list.count"

41

zammad run rails r "p User.count"

529

zammad run rails r "p Overview.count"

46

zammad run rails r "p Group.count"

15

zammad run rails r 'p Delayed::Job.count'

0

zammad run rails r 'p Delayed::Job.first'

nil
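The counts above were collected one command at a time. Below is a minimal sketch for gathering them in one pass, so the same snapshot can be repeated while the slowdown is actually happening. The `collect_counts` function and the `ZAMMAD_BIN` variable are hypothetical names introduced for this sketch and are not part of Zammad; the Ruby expressions are the ones used above.

```shell
#!/usr/bin/env bash
# Sketch: print each Zammad count from the list above in a single pass.
# ZAMMAD_BIN is a hypothetical override (not a Zammad setting) so that the
# wrapper command can be swapped out; it defaults to the packaged `zammad` CLI.
ZAMMAD_BIN="${ZAMMAD_BIN:-zammad}"

collect_counts() {
  local expr
  for expr in \
    "User.joins(roles: :permissions).where(roles: { active: true }, permissions: { name: 'ticket.agent', active: true }).uniq.count" \
    "Sessions.list.count" \
    "User.count" \
    "Overview.count" \
    "Group.count" \
    "Delayed::Job.count"
  do
    # Label each result with the expression that produced it.
    printf '%s => ' "$expr"
    "$ZAMMAD_BIN" run rails r "p $expr"
  done
}
```

After sourcing the snippet, `collect_counts` prints one `expression => value` line per count. Each `rails r` call loads the application separately, so a full pass will likely take a while on a loaded system.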

Our configuration of Zammad:

The recommendations from the Zammad documentation were used.

Elasticsearch:

es_version: 7.16.3

es_heap_size: 4gb

es_plugins: ingest-attachment

es_config:
  node.name: "zammad_es"
  http.host: localhost
  http.port: 9200
  http.max_content_length: 400mb
  indices.query.bool.max_clause_count: 2000
  bootstrap.memory_lock: true

Postgres:

postgresql_hba_entries:

- { type: local, database: all, user: postgres, auth_method: trust }

- { type: local, database: all, user: all, auth_method: trust }

- { type: host, database: all, user: all, address: '127.0.0.1/32', auth_method: md5 }

- { type: host, database: all, user: all, address: '::1/128', auth_method: md5 }

postgresql_global_config_options:

- option: unix_socket_directories
  value: '/var/run/postgresql'

- option: max_connections
  value: 2000

- option: shared_buffers
  value: '2GB'

- option: temp_buffers
  value: '256MB'

- option: work_mem
  value: '10MB'

- option: max_stack_depth
  value: '5MB'
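As a rough sanity check on these values (a sketch, not a tuning recommendation): in PostgreSQL, `work_mem` applies per sort or hash operation and `temp_buffers` per session, so `max_connections` multiplies both. The arithmetic below computes the theoretical ceilings for the settings above and compares them with the 24 GB test configuration. The variable names are only illustrative.

```shell
#!/usr/bin/env bash
# Values copied from the Postgres configuration above.
max_connections=2000
work_mem_mb=10
temp_buffers_mb=256
shared_buffers_mb=2048

# RAM of the larger test configuration (24 GB), in MB.
ram_mb=$((24 * 1024))

# Theoretical ceilings if every allowed connection were in use at once.
# work_mem can be claimed several times by one query, so this assumes a
# single sort or hash operation per connection.
work_mem_ceiling_mb=$((max_connections * work_mem_mb))
temp_buffers_ceiling_mb=$((max_connections * temp_buffers_mb))

echo "shared_buffers:       ${shared_buffers_mb} MB"
echo "work_mem ceiling:     ${work_mem_ceiling_mb} MB"
echo "temp_buffers ceiling: ${temp_buffers_ceiling_mb} MB"
echo "available RAM:        ${ram_mb} MB"
```

With these settings the ceilings come out at 20000 MB for `work_mem` and 512000 MB for `temp_buffers`. They would only be approached if close to 2000 connections were actually open and busy, which is not established by the numbers in this report.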

I think the bottleneck is the Postgres database, but the available resources are not being used. Can you help me with this problem?

Unfortunately, this problem was not noticed on the test system.

Best regards

Patrick