Infos:

- Used Zammad version: 2.8.0

- Used Zammad installation source: package (RPM)

- Operating system: openSUSE 42.3

- Browser + version: Chrome 71

Expected behavior:

- The import runs through all tickets in the old OTRS 3.3.7 system (roughly 100k tickets).

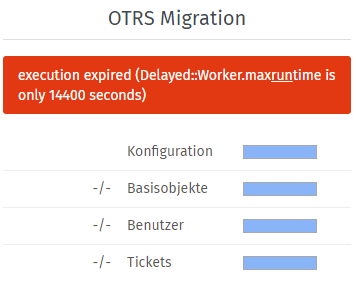

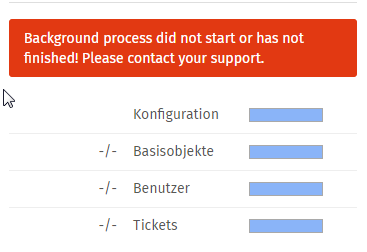

Actual behavior:

- The import fails with an error after ~1,500 tickets, and the console exits.

Until then, the log looks fine to me; see the excerpt below as an example:

```
thread#1: Ticket 98651, Article 465361 - Starting import for fingerprint f347f314d9a38ad7c56831ad7dca41829229ae82ca3b84ed8542b1a6b869c779 (file-2)... Queue: [1].
I, [2019-01-03T09:41:03.464027 #2885-47276350211760] INFO -- : thread#4: add Ticket::Article.find_by(id: 463551)
I, [2019-01-03T09:41:03.466138 #2885-47276350215520] INFO -- : thread#1: Ticket 98651, Article 465361 - Finished import for fingerprint f347f314d9a38ad7c56831ad7dca41829229ae82ca3b84ed8542b1a6b869c779 (file-2)... Queue: [1].
I, [2019-01-03T09:41:03.472511 #2885-47276350214820] INFO -- : thread#2: Ticket 98796, Article 467619 - Starting import for fingerprint a3c7e83f85dfa0205d3980ca891ef82df9516999832c7fb0755e367168b9e347 (file-2)... Queue: [2].
I, [2019-01-03T09:41:03.473202 #2885-47276354371000] INFO -- : thread#7: Ticket 98692, Article 463828 - Starting import for fingerprint ae6153a62144b9a7e9c8ec5f2b47e0bd7f30618e63665450835f605469cc92b3 (gwcheck.log)... Queue: [7].
I, [2019-01-03T09:41:03.485548 #2885-47276350215520] INFO -- : thread#1: add Ticket::Article.find_by(id: 465384)
```

The following error is printed on the console when the import exits (only the output of thread 5 is shown):

```
thread#5: POST: http://helpdesk.xxxx.com/otrs/public.pl?Action=ZammadMigrator
thread#5: PARAMS: {:Subaction=>"Export", :Object=>"Ticket", :Limit=>20, :Offset=>1200, :Diff=>0, :Action=>"ZammadMigrator", :Key=>"XXXXX"}
thread#5: ERROR: Server Error: #<Net::HTTPInternalServerError 500 Internal Server Error readbody=true>!
/opt/zammad/lib/import/otrs/requester.rb:132:in `post': Zammad Migrator returned an error (RuntimeError)
	from /opt/zammad/lib/import/otrs/requester.rb:92:in `request_json'
	from /opt/zammad/lib/import/otrs/requester.rb:79:in `request_result'
	from /opt/zammad/lib/import/otrs/requester.rb:34:in `load'
	from /opt/zammad/lib/import/otrs.rb:143:in `import_action'
	from /opt/zammad/lib/import/otrs.rb:137:in `imported?'
	from /opt/zammad/lib/import/otrs.rb:101:in `block (3 levels) in threaded_import'
	from /opt/zammad/lib/import/otrs.rb:95:in `loop'
	from /opt/zammad/lib/import/otrs.rb:95:in `block (2 levels) in threaded_import'
	from /opt/zammad/vendor/bundle/ruby/2.4.0/gems/logging-2.2.2/lib/logging/diagnostic_context.rb:474:in `block in create_with_logging_context'
```

In the Apache error log on the OTRS machine I can see the following JSON error. It occurs exactly six times, and after the sixth occurrence the import exits. (The timestamps below do not match the console log above because I only had the logs from the second run at hand, having purged the earlier ones, but the same errors appear.)

```
[Thu Jan 03 11:47:00 2019] [error] malformed or illegal unicode character in string [\xed\xa0\xbd\xed\xb8\x89\n \nGr], cannot convert to JSON at /opt/otrs/Kernel/cpan-lib/JSON.pm line 154.\n
[Thu Jan 03 11:47:30 2019] [error] malformed or illegal unicode character in string [\xed\xa0\xbd\xed\xb8\x89\n \nGr], cannot convert to JSON at /opt/otrs/Kernel/cpan-lib/JSON.pm line 154.\n
[Thu Jan 03 11:48:15 2019] [error] malformed or illegal unicode character in string [\xed\xa0\xbd\xed\xb8\x89\n \nGr], cannot convert to JSON at /opt/otrs/Kernel/cpan-lib/JSON.pm line 154.\n
[Thu Jan 03 11:53:26 2019] [error] malformed or illegal unicode character in string [\xed\xa0\xbd\xed\xb8\x8a. Du], cannot convert to JSON at /opt/otrs/Kernel/cpan-lib/JSON.pm line 154.\n
[Thu Jan 03 11:53:56 2019] [error] malformed or illegal unicode character in string [\xed\xa0\xbd\xed\xb8\x8a. Du], cannot convert to JSON at /opt/otrs/Kernel/cpan-lib/JSON.pm line 154.\n
[Thu Jan 03 11:54:41 2019] [error] malformed or illegal unicode character in string [\xed\xa0\xbd\xed\xb8\x8a. Du], cannot convert to JSON at /opt/otrs/Kernel/cpan-lib/JSON.pm line 154.\n
```
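The byte sequences in these errors look like UTF-16 surrogate halves that were each written out separately as a three-byte sequence (a CESU-8 style encoding): `\xed\xa0\xbd` decodes to U+D83D and `\xed\xb8\x89` to U+DE09, which together form U+1F609 (😉); `\xed\xb8\x8a` likewise pairs with U+D83D to give U+1F60A (😊). A strict UTF-8 encoder such as the one behind JSON.pm rejects such surrogates. The failure happens on the OTRS (Perl) side, so the following is only a minimal Ruby sketch of the byte-level repair, assuming the raw article text is available as a string; `repair_cesu8` is a hypothetical helper, not part of Zammad or the OTRS migrator.

```ruby
# Hypothetical helper (not part of Zammad): recombine UTF-16 surrogate pairs that
# were stored as two separate 3-byte sequences (CESU-8 style) into valid UTF-8.

# Decode a 3-byte sequence (1110xxxx 10xxxxxx 10xxxxxx) into its code point.
def decode_3byte(b0, b1, b2)
  ((b0 & 0x0F) << 12) | ((b1 & 0x3F) << 6) | (b2 & 0x3F)
end

# True if the 6 bytes are an encoded high surrogate (U+D800..U+DBFF)
# immediately followed by an encoded low surrogate (U+DC00..U+DFFF).
def surrogate_pair?(b)
  b.length == 6 &&
    b[0] == 0xED && (0xA0..0xAF).cover?(b[1]) && (0x80..0xBF).cover?(b[2]) &&
    b[3] == 0xED && (0xB0..0xBF).cover?(b[4]) && (0x80..0xBF).cover?(b[5])
end

def repair_cesu8(raw)
  bytes = raw.b.bytes # work on raw bytes, ignoring the declared encoding
  out = []
  i = 0
  while i < bytes.length
    chunk = bytes[i, 6]
    if surrogate_pair?(chunk)
      high = decode_3byte(*chunk[0, 3])
      low  = decode_3byte(*chunk[3, 3])
      # Standard UTF-16 surrogate-pair formula.
      code_point = 0x10000 + ((high - 0xD800) << 10) + (low - 0xDC00)
      out.concat([code_point].pack('U').bytes) # proper 4-byte UTF-8
      i += 6
    else
      out << bytes[i] # copy every other byte unchanged
      i += 1
    end
  end
  out.pack('C*').force_encoding(Encoding::UTF_8)
end

broken = "\xed\xa0\xbd\xed\xb8\x89\n \nGr".b
fixed  = repair_cesu8(broken)
puts fixed.valid_encoding?           # → true
puts fixed.codepoints.first.to_s(16) # → 1f609
```

Lone surrogate halves without a partner are copied through unchanged and would still be rejected by a strict encoder, so they would need separate handling (for example, replacing them with U+FFFD).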

Steps to reproduce the behavior:

- Set up a fresh Zammad instance.

- Install the Zammad migrator for OTRS 3.3.

- Run the import manually from the console:

```
zammad run rails c
```

```ruby
Setting.set('import_otrs_endpoint', 'http://helpdesk.xxxx.com/otrs/public.pl?Action=ZammadMigrator')
Setting.set('import_otrs_endpoint_key', 'XXXXXXX')
Setting.set('import_mode', true)
Import::OTRS.start
```

Any idea how to identify which tickets in OTRS contain these malformed Unicode characters, or how to escape them properly during the import?
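One way to narrow down the culprit is to replay the failing request in smaller pieces. The console output shows thread 5 requesting `Limit: 20` tickets at `Offset: 1200`, so the problematic ticket is presumably one of the 20 in that batch. The sketch below re-sends that request with `Limit: 1` for each offset and collects the offsets answered with HTTP 500. It assumes the migrator accepts the parameters from the log as a form-encoded POST, the way the Zammad requester appears to send them; `find_failing_offsets` is a hypothetical helper, and the endpoint and key are the same values passed to `Setting.set`.

```ruby
require 'net/http'
require 'uri'

# Hypothetical helper: re-request a failed batch one ticket at a time and
# return the offsets for which the migrator answers with HTTP 500.
# `fetch` receives the form parameters and returns the HTTP status code;
# by default it POSTs them to the migrator endpoint (an assumption based on
# the PARAMS line in the console output).
def find_failing_offsets(endpoint, key, first_offset, count, fetch: nil)
  fetch ||= ->(params) { Net::HTTP.post_form(URI(endpoint), params).code.to_i }

  (first_offset...(first_offset + count)).select do |offset|
    params = {
      'Action'    => 'ZammadMigrator',
      'Subaction' => 'Export',
      'Object'    => 'Ticket',
      'Limit'     => 1,       # one ticket per request
      'Offset'    => offset,
      'Diff'      => 0,
      'Key'       => key
    }
    fetch.call(params) == 500
  end
end
```

For example, `find_failing_offsets('http://helpdesk.xxxx.com/otrs/public.pl?Action=ZammadMigrator', 'XXXXXXX', 1200, 20)` would return the failing offsets; the `fetch:` keyword exists only so the HTTP call can be replaced by a stub when testing. Each failing request should also leave a matching `cannot convert to JSON` line in the Apache error log, showing the offending text.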

Thanks, Alex

…and the import stops. Unfortunately, we have some MIME data in the articles of our OTRS system. It would be great if the output showed which article caused the failure (right now I go through the production log, search for the thread that failed, and then identify the culprit).


We’re working on an improved version, which isn’t ready yet and will take some time.